Eval-Driven Development: The New TDD for the Agentic Era

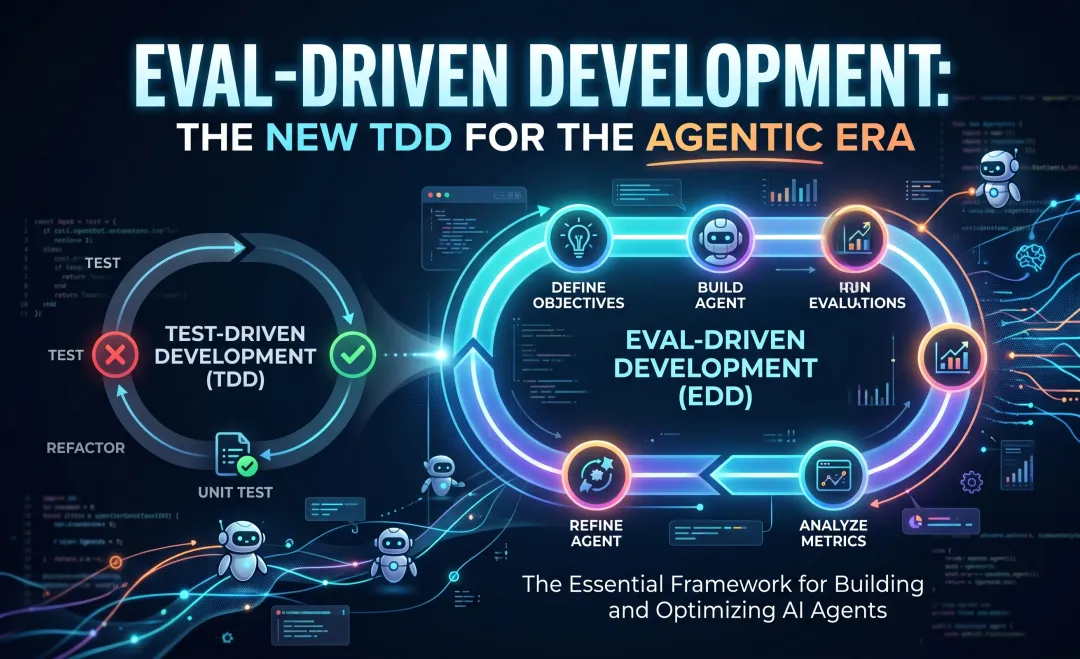

For decades, the bedrock of reliable software engineering has been deterministic. We write logic, we define the expected state, and we verify it. You pass x into a function, and you expect y to come out. Test-Driven Development (TDD) enshrined this into our culture: write the failing test, write the code to pass it, refactor, and deploy.

But software engineering is undergoing a fundamental shift. We are no longer just writing deterministic logic; we are orchestrating non-deterministic AI agents.

The Death of Determinism

Imagine you are trying to write a standard unit test for a new LLM-powered feature. You write a strict assertion checking for the exact string “The total cost is $50.” You run the test. It passes. You trigger the CI pipeline an hour later, and it fails because the model enthusiastically added, “Sure! Based on the context provided, the total cost is $50. Let me know if you need anything else!”

The underlying logic was correct, but the test failed. Traditional TDD breaks under the weight of AI non-determinism. To build reliable, production-ready systems in the agentic era, engineering teams must adopt a new paradigm: Eval-Driven Development (EDD).

TDD is Dead, Long Live EDD

The core problem with applying standard unit testing to Large Language Models is the brittleness of exact string matching. LLMs are probabilistic engines. They introduce natural variations in tone, structure, vocabulary, and length, even when the factual payload remains perfectly accurate.

This requires a fundamental shift in engineering mindset:

- TDD is binary and deterministic. A test either passes or fails. The output is exactly right or completely wrong.

- EDD is probabilistic, threshold-based, and continuous. An output is evaluated on a spectrum of quality, alignment, and accuracy.

Think of it this way: TDD is like grading a multiple-choice math test. There is only one right answer. EDD is like grading a college essay with a rubric. You are evaluating the response for semantic accuracy, absence of hallucinations, and adherence to system instructions.

The Anatomy of an Eval

So, what exactly is an Eval? In practice, an evaluation pipeline is a programmatic way to score the outputs of your AI system against a baseline of expected behavior.

1. The Golden Dataset

The foundation of EDD is the “Golden Dataset.” Before you start tweaking your prompts or tuning your RAG (Retrieval-Augmented Generation) pipeline, you must define what “good” looks like.

A Golden Dataset is a curated, high-quality collection of inputs paired with their ideal outputs or expected behaviors. For teams just starting out, gather 50 to 100 high-variance examples representing the most critical edge cases.

In 2026, the industry has moved toward Self-Evolving Golden Datasets. Engineers use “Red-Teaming” models (like Claude Mythos or GPT-5.5 Thinking) to analyze production logs and synthetically generate adversarial edge cases that the system hasn’t encountered yet.

2. Deterministic Checks (The Easy Part)

Not every Eval requires a complex neural network. The first layer of your Eval pipeline should always be deterministic:

- Schema Validation: Did the model output valid JSON?

- Formatting/Regex: Does the output contain the required patterns (e.g., a 16-digit account number)?

- Constraints: Did the model stay under the requested character limit?

3. Probabilistic Checks (The Rubric)

For subjective aspects like tone, safety, and hallucination rates, you must define clear grading criteria. You build a rubric.

The EDD Development Loop

Adopting EDD changes the day-to-day workflow of an AI developer. Just as TDD forces you to write tests before code, EDD forces you to define your evaluation criteria before engineering your prompts.

graph TD

A[Define Eval & Rubric] --> B[Run Agent on Golden Dataset]

B --> C[Programmatic Scoring]

C --> D{Meets Threshold?}

D -- No --> E[Refine Prompt/RAG/Tools]

E --> B

D -- Yes --> F[Deploy to Production]

Step 1: Define the Eval (Expected Behavior)

Before writing a single line of orchestration code, look at your Golden Dataset. Write the grading rubric. For example: “Score 1 if the summary includes the customer’s core complaint. Score 0 if it misses it.”

Step 2: Run the Agent

Execute your system against the inputs in your Golden Dataset and collect the outputs.

Step 3: Score the Output

How do you programmatically score natural language? You have two primary weapons:

Semantic Similarity: Use embedding models to check the “distance” between the AI’s answer and the Golden answer. If the cosine similarity is above 0.85, you consider it a pass.

LLM-as-a-Judge: Use a highly capable, “expensive” model (like GPT-5.5 Pro, Claude Opus 4.7, or Gemini 3.1 Pro) to act as an impartial judge. This allows you to grade the output of a faster, cheaper production model (like Llama 4 Maverick or Gemini 3 Flash) reliably and automatically.

Here is a standard Judge Prompt Template:

### Role: Impartial Engineering Quality Auditor

Evaluate the following AI Agent output based on the Rubric provided.

**User Input:** {{input}}

**Context Provided:** {{context}}

**Golden Answer:** {{ideal_output}}

**Agent Output:** {{actual_output}}

### Rubric:

- Score 1: Hallucination or severe logic failure.

- Score 2: Accurate factual content but incorrect tone or formatting.

- Score 3: Perfectly aligned with Golden Answer and context.

Return your response in JSON: { "score": int, "rationale": "string" }Calibrating the Judge: Who Judges the Judge?

To trust your Evals, you must implement Judge Calibration. Periodically, a human expert should grade a random sample (e.g., 5%) of the outputs. If the agreement is below 90%, your rubric is likely too subjective and needs refinement.

Step 4: Refine the System

Look at the aggregate scores. Because you have a repeatable benchmark, you can confidently iterate on your Prompt, RAG pipeline, or Agent Tools.

Once you have mastered scoring the final output, however, you’ll encounter a new challenge: what happens when the result is correct, but the process was broken?

Beyond the Output: Trajectory Evals

In the agentic era, evaluating the final answer is only half the battle. Trajectory Evaluation (or Trace Eval) focuses on the “how”:

- Tool Efficiency: Did it call the same API multiple times unnecessarily?

- Logic Path: Did it hallucinate a tool that doesn’t exist?

- Security: Did it attempt to access unauthorized resources during its internal reasoning?

Scaling Up: CI/CD for AI & Trade-offs

AI systems degrade silently. Without Evals, you are flying blind.

Integrating Evals into GitHub Actions

- The PR Gate: Run a small, fast subset of Evals (deterministic checks and similarity scores) on every Pull Request.

- The Nightly Build: Run the exhaustive Golden Dataset suite (LLM-as-a-Judge) across hundreds of test cases.

The Elephant in the Room: Cost vs. Quality

Running thousands of LLM-as-a-judge tests daily gets expensive.

- Caching is King: Cache outputs of deterministic checks.

- Tiered Routing: Reserve expensive models (like GPT-5.5 Pro) for critical nightly pipelines.

- Sample Subsets: Use random subsets of logs for minor PRs.

The Prompt-Eval Registry

Treat prompts as deployment artifacts. A Prompt-Eval Registry stores every version of a prompt alongside its benchmark scores, allowing for “Rollback on Regression.”

The Cultural Shift: From Vibe Checks to Hard Metrics

The hardest part of adopting EDD is the mindset shift from:

- The Vibe Check (Dev-time): Qualitative exploration. “Does this feel better?”

- Hard Metrics (Prod-time): Threshold-based Evals. “Does this maintain 98% factual accuracy?”

The Agentic Mandate: Engineering Certainty in an Uncertain Era

The era of shipping an LLM feature by simply saying “looks good to me” is over. Agents are only as reliable as the systems used to evaluate them. Without Eval-Driven Development, deploying LLMs is just guessing.

Stop writing brittle string assertions, and make EDD your new engineering standard.