Engineering the Prompt Lifecycle: Decoupling LLM Logic from Application Code

Building an AI wrapper is easy. Any junior developer can wrap a fetch call around an OpenAI endpoint, inject a few variables into a string, and call it a feature. But maintaining that feature in production, where models drift, instructions conflict, and non-technical stakeholders need to tweak copy without a two-week sprint cycle, is where the real engineering nightmare begins.

The industry is reaching a critical inflection point. We are realizing that prompts are no longer just strings of text; they are core software assets that drive critical application logic. Yet, most teams are still treating them like 2010-era configuration variables.

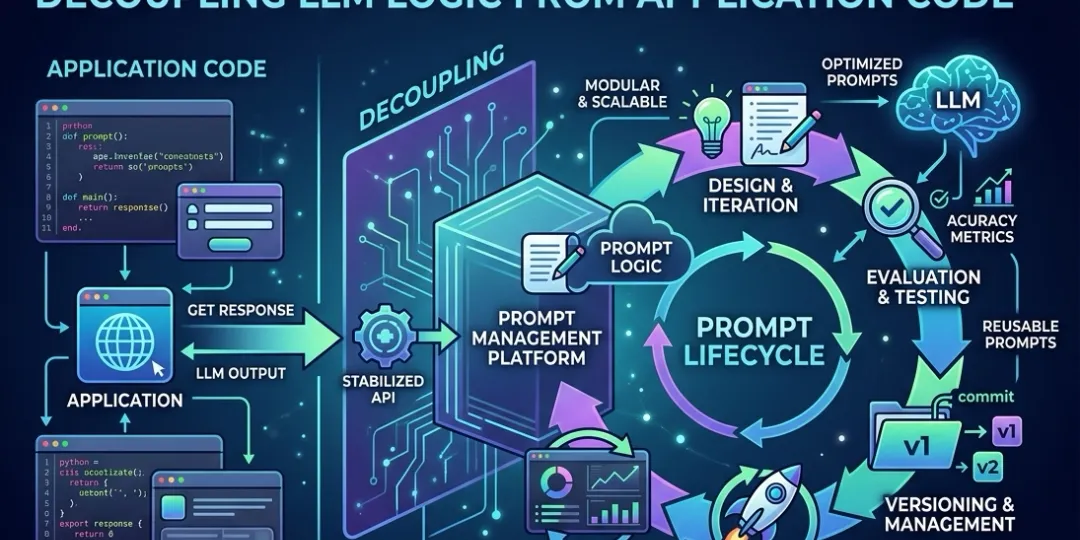

To scale AI development, we must treat prompts like code. This requires a fundamental architectural shift: the adoption of a Prompt Registry.

The “Before” State: The Chaos of Unmanaged Prompts

If your prompts live inside your source code, you are likely suffering from three primary bottlenecks:

1. The Hardcoding Nightmare

When prompts are buried deep within your application logic, they become invisible and immutable to anyone but the developer. Changing a single sentence in a system instruction requires a full CI/CD cycle: a branch, a pull request, code review, and a deployment. This is an unacceptable lag for an asset that often needs hourly refinement.

2. The Collaboration Bottleneck

Domain experts, such as Product Managers, Subject Matter Experts (SMEs), and dedicated Prompt Engineers, are often the ones who should be writing and refining prompts. Forcing them to navigate GitHub, learn Markdown, and submit PRs is a recipe for friction. It turns your highly paid engineers into human “copy-paste” proxies.

3. The Observability Void

When a model hallucinates in production, how do you know which version of the prompt triggered it? Without a dedicated registry, tracing a specific LLM output back to its exact instruction set is nearly impossible, especially when prompts are dynamically constructed with complex logic.

What Exactly is a Prompt Registry?

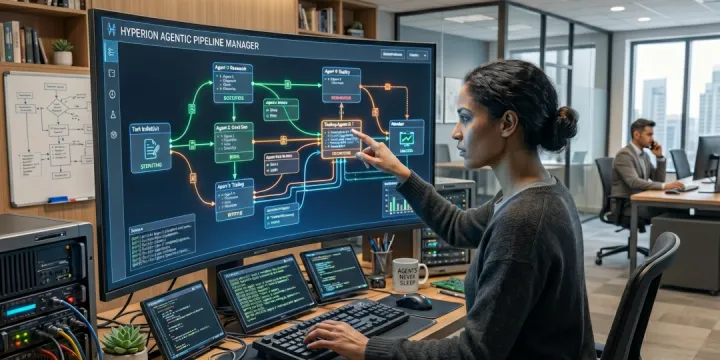

A Prompt Registry is a centralized repository designed to store, manage, and serve prompts as independent artifacts, decoupled from the main application code.

Think of it as the Docker Container Registry for your AI infrastructure or a Headless CMS for your LLM instructions. Your application code handles the routing and the orchestration, while the registry handles the content and the versioning.

The Architecture of Decoupled Prompts

In a modern LLMOps stack, the application does not “know” the prompt. It only knows the Prompt ID and the Variables it needs to inject.

    graph LR
        subgraph "Registry (Source of Truth)"
            R1[Prompt: 'Support Bot']
            R2[Version: v1.2.4]
            R3[Status: Production]
        end
        subgraph "Application Code"
            A1[Request Task]
            A2[Fetch Latest 'Support Bot']
            A3[Inject Variables]
        end
        subgraph "LLM Provider"
            L1[GPT-5.5 Pro / Claude Opus 4.7]
        end
        A1 --> A2
        A2 -- Get v1.2.4 --> R2
        A2 --> A3
        A3 -- Prompt + Data --> L1
        L1 -- Response --> A1
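The flow in the diagram can be sketched in a few lines of Python. This is a minimal illustration, not a real registry client: `PromptRecord`, `REGISTRY`, `inject_variables`, `run_task`, and `fake_llm` are all hypothetical names, the registry is an in-memory dictionary, and the LLM call is a stub. The small regex renderer only stands in for a real template engine.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PromptRecord:
    prompt_id: str
    version: str
    status: str
    template: str


# Hypothetical in-memory stand-in for the registry (the source of truth).
REGISTRY = {
    "support-bot": PromptRecord(
        prompt_id="support-bot",
        version="v1.2.4",
        status="production",
        template="You are a support agent for {{product}}. Answer: {{question}}",
    )
}

_PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def inject_variables(template: str, variables: dict[str, str]) -> str:
    """Replace each {{name}} placeholder; fail loudly if a variable is missing."""

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in variables:
            raise KeyError(f"missing template variable: {name}")
        return str(variables[name])

    return _PLACEHOLDER.sub(substitute, template)


def run_task(prompt_id: str, variables: dict[str, str],
             call_llm: Callable[[str], str]) -> dict:
    """The application knows only the prompt ID and the variables."""
    record = REGISTRY[prompt_id]                           # fetch from registry
    prompt = inject_variables(record.template, variables)  # inject variables
    return {"prompt_version": record.version, "response": call_llm(prompt)}


def fake_llm(prompt: str) -> str:
    # Stub provider so the sketch runs without network access.
    return f"[stub response to a {len(prompt)}-character prompt]"


result = run_task(
    "support-bot",
    {"product": "Acme CRM", "question": "How do I reset my password?"},
    fake_llm,
)
print(result["prompt_version"], result["response"])
```

Returning the prompt version alongside the response is a deliberate choice here: it gives downstream logging something concrete to attach to each output.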

Core Features of a Robust Registry

A production-grade Prompt Registry is more than just a database of strings. It must support the following engineering requirements:

1. Version Control & Audit Logs

Every change to a prompt must be versioned. You should be able to roll back a prompt in seconds if a new version causes a spike in latency or an increase in hallucination rates. Git-style audit logs provide a clear paper trail of who changed what and why.
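As a rough sketch of what versioning, rollback, and an audit trail involve, the following in-memory model keeps every published version, tracks which one is in production, and appends a log entry for each action. The class name `VersionedPrompt` and its methods are illustrative assumptions and do not correspond to any specific registry product.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class VersionedPrompt:
    name: str
    versions: list[str] = field(default_factory=list)        # index 0 is version 1
    production_history: list[int] = field(default_factory=list)
    audit_log: list[dict] = field(default_factory=list)

    def _log(self, action: str, version: int, author: str, reason: str) -> None:
        # Who changed what, when, and why.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "version": version,
            "author": author,
            "reason": reason,
        })

    def publish(self, text: str, author: str, reason: str) -> int:
        """Store a new immutable version and return its 1-based number."""
        self.versions.append(text)
        version = len(self.versions)
        self._log("publish", version, author, reason)
        return version

    def promote(self, version: int, author: str, reason: str) -> None:
        """Mark an existing version as the production version."""
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"unknown version: {version}")
        self.production_history.append(version)
        self._log("promote", version, author, reason)

    def rollback(self, author: str, reason: str) -> int:
        """Return production to the previously promoted version."""
        if len(self.production_history) < 2:
            raise RuntimeError("no earlier production version to roll back to")
        self.production_history.pop()
        version = self.production_history[-1]
        self._log("rollback", version, author, reason)
        return version

    def production_text(self) -> str:
        if not self.production_history:
            raise LookupError("no version has been promoted yet")
        return self.versions[self.production_history[-1] - 1]


prompt = VersionedPrompt("support-bot")
v1 = prompt.publish("Be concise.", "alice", "initial version")
prompt.promote(v1, "alice", "launch")
v2 = prompt.publish("Be concise and cite sources.", "bob", "reduce hallucinations")
prompt.promote(v2, "bob", "passed evals")
prompt.rollback("alice", "latency spike after v2")
print(prompt.production_text())
```

Because a rollback only moves a pointer and never rewrites stored text, it can be fast and leaves the full history intact.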

2. Dynamic Templating

Prompts are rarely static. A registry should support industry-standard templating (like Jinja2 or Handlebars) to allow for variable injection:

    // Example: summary-bot/v2
    "instruction": "Summarize the following {{language}} text in exactly {{word_count}} words: {{text}}"

3. API-First Delivery

The application should fetch the latest “production-tagged” prompt at runtime via a REST API or a lightweight SDK. This allows for instant updates without redeploying the application binary.
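One way to picture runtime delivery is a thin client that asks the registry for the prompt carrying a given tag, caches it briefly, and falls back to the last known copy if the registry is unreachable. The sketch below assumes a hypothetical `PromptClient` class and takes the transport as an injected `fetch` function, so no real registry endpoint or SDK is implied.

```python
from __future__ import annotations

import time
from typing import Callable


class PromptClient:
    """Fetches tagged prompts at runtime with a small TTL cache."""

    def __init__(self, fetch: Callable[[str, str], str],
                 ttl_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch          # e.g. a function wrapping a REST call
        self._ttl = ttl_seconds
        self._clock = clock
        self._cache: dict[tuple[str, str], tuple[float, str]] = {}

    def get(self, prompt_id: str, tag: str = "production") -> str:
        key = (prompt_id, tag)
        now = self._clock()
        cached = self._cache.get(key)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]         # fresh enough, skip the network
        try:
            text = self._fetch(prompt_id, tag)
        except Exception:
            if cached is not None:
                return cached[1]     # registry down: serve the stale copy
            raise                    # nothing cached, surface the error
        self._cache[key] = (now, text)
        return text


# Hypothetical stand-in for the registry API.
calls: list[tuple[str, str]] = []
remote = {("summary-bot", "production"): "Summarize in {{word_count}} words: {{text}}"}


def fake_fetch(prompt_id: str, tag: str) -> str:
    calls.append((prompt_id, tag))
    return remote[(prompt_id, tag)]


client = PromptClient(fake_fetch, ttl_seconds=60)
first = client.get("summary-bot")
second = client.get("summary-bot")   # served from cache
print(len(calls))
```

The cache is a trade-off: a prompt update reaches the application within one TTL window rather than immediately, in exchange for not calling the registry on every request.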

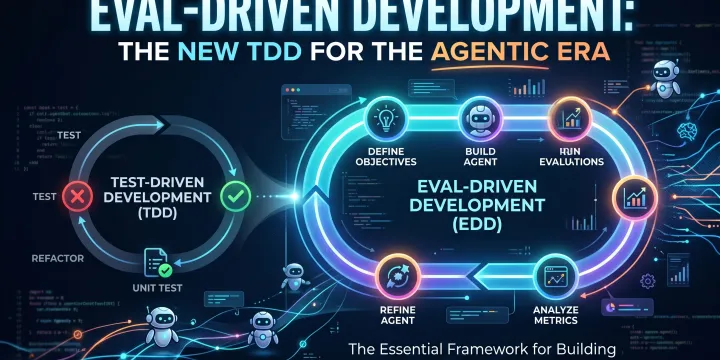

4. A/B Testing & Evaluation (The “Holy Grail”)

The best registries don’t just store prompts; they evaluate them. They let you run two versions of a prompt side by side (A/B testing) and measure which one yields better accuracy, lower cost, or faster response times.
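A simple way to run two prompt versions side by side is to assign each user to a variant deterministically and aggregate per-variant metrics. The sketch below is an assumption about how this could be wired up: `assign_variant` and `ExperimentLog` are hypothetical names, and the recorded numbers are made-up sample inputs rather than measurements.

```python
from __future__ import annotations

import hashlib
from collections import defaultdict
from statistics import mean


def assign_variant(user_id: str, experiment: str, variants: list[str]) -> str:
    """Hash the user into a stable bucket so they always see the same variant."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


class ExperimentLog:
    """Collects per-call outcomes and summarizes them per variant."""

    def __init__(self) -> None:
        self._rows: dict[str, list[tuple[bool, float, float]]] = defaultdict(list)

    def record(self, variant: str, *, correct: bool,
               cost_usd: float, latency_ms: float) -> None:
        self._rows[variant].append((correct, cost_usd, latency_ms))

    def summary(self) -> dict[str, dict[str, float]]:
        return {
            variant: {
                "n": len(rows),
                "accuracy": mean(1.0 if r[0] else 0.0 for r in rows),
                "avg_cost_usd": mean(r[1] for r in rows),
                "avg_latency_ms": mean(r[2] for r in rows),
            }
            for variant, rows in self._rows.items()
        }


variants = ["summary-bot/v1", "summary-bot/v2"]
chosen = assign_variant("user-42", "summary-wording", variants)

log = ExperimentLog()
# Illustrative sample data only.
log.record("summary-bot/v1", correct=True, cost_usd=0.01, latency_ms=100.0)
log.record("summary-bot/v1", correct=False, cost_usd=0.03, latency_ms=300.0)
log.record("summary-bot/v2", correct=True, cost_usd=0.02, latency_ms=150.0)
print(log.summary())
```

Hashing on the experiment name plus the user ID keeps assignments stable across requests and independent between experiments.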

The Benefits: Strategic and Technical ROI

Faster Iteration (Time to Market)

By decoupling prompts from code, non-technical team members can iterate on instructions in real time. This reduces the “Idea-to-Production” loop for AI features from days to minutes.

Cost & Latency Optimization

A registry makes it easy to swap models when the model ID is stored alongside the prompt. Want to test whether Gemini 3.1 Flash can handle a summarization task currently performed by GPT-5.5 Pro? Update the model ID in the registry, run a few evals, and flip the switch, with no application code changes as long as the application already supports both providers.
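The model swap works when the registry entry carries the model ID and the application simply dispatches on it. The following sketch uses placeholder model names (`model-large`, `model-small`) and stubbed provider functions; `PromptConfig`, `PROVIDERS`, and `summarize` are hypothetical names chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, replace
from typing import Callable


@dataclass(frozen=True)
class PromptConfig:
    template: str
    model: str              # the model ID lives in the registry, not in code
    temperature: float = 0.0


# Hypothetical registry entry.
registry = {
    "summarizer": PromptConfig(
        template="Summarize the following text: {{text}}",
        model="model-large",
    )
}

# Stub provider adapters; real ones would call each vendor's API.
PROVIDERS: dict[str, Callable[[str, float], str]] = {
    "model-large": lambda prompt, temperature: f"large:{prompt}",
    "model-small": lambda prompt, temperature: f"small:{prompt}",
}


def summarize(text: str) -> str:
    """Application code: never names a model, only reads the registry entry."""
    config = registry["summarizer"]
    call_model = PROVIDERS[config.model]
    prompt = config.template.replace("{{text}}", text)
    return call_model(prompt, config.temperature)


before = summarize("Quarterly revenue rose.")

# A registry-side edit: same prompt, different model ID.
registry["summarizer"] = replace(registry["summarizer"], model="model-small")
after = summarize("Quarterly revenue rose.")
print(before)
print(after)
```

The `summarize` function is untouched between the two calls, which is the point. The caveat is that an adapter for the target model must already exist in `PROVIDERS`.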

Security & Compliance

In regulated industries (FinTech, HealthTech), teams are often required to keep a definitive record of the instructions given to an AI system. A registry provides this “instructional provenance” out of the box.
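A provenance record can be as small as one structured log line per LLM call that ties the output to the exact prompt version. The sketch below is an assumption about what such a record might contain: the field names, `provenance_record`, and `append_record` are hypothetical, and it hashes the prompt and response rather than storing them, which may or may not suit a given compliance regime.

```python
from __future__ import annotations

import hashlib
import io
import json
from datetime import datetime, timezone
from typing import TextIO


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def provenance_record(prompt_id: str, version: str, rendered_prompt: str,
                      model: str, response: str) -> dict:
    """Describe which prompt version and model produced a given response."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt_id": prompt_id,
        "prompt_version": version,
        "model": model,
        "prompt_sha256": _sha256(rendered_prompt),
        "response_sha256": _sha256(response),
    }


def append_record(stream: TextIO, record: dict) -> None:
    """Write one JSON object per line (append-only log)."""
    stream.write(json.dumps(record, sort_keys=True) + "\n")


audit_stream = io.StringIO()   # stand-in for an append-only file or log sink
record = provenance_record(
    prompt_id="billing/invoice-summarizer",
    version="v3",
    rendered_prompt="Summarize invoice 1001 for the customer.",
    model="placeholder-model",
    response="Invoice 1001 totals $120, due in 30 days.",
)
append_record(audit_stream, record)
print(audit_stream.getvalue())
```

With the version and hash on every record, tracing a problematic output back to its instruction set becomes a log query instead of guesswork.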

Tool Landscape: The 2026 Market

The LLMOps ecosystem has matured. Choosing a tool depends on your team’s specific pain points:

| Tool | Best For… | Key Differentiator |
|---|---|---|
| Braintrust | Enterprise evaluation | Best-in-class environment management and data-driven testing. |
| LangSmith | LangChain users | Deep integration with the LangChain ecosystem and massive community Hub. |
| PromptLayer | Collaboration | The premier no-code visual editor for non-technical stakeholders. |
| Langfuse | Open-source purists | Powerful open-source alternative with full-stack observability. |
| Vellum | Complex Agents | Visual playground for side-by-side prompt-model combinations. |
| PromptHub | Dev-centric teams | Brings true Git-style mechanics (branching, PRs) to a REST API. |

Best Practices for Implementation

- Strict Naming Taxonomy: Use a consistent naming convention: [service]/[task]/[version] (e.g., billing/invoice-summarizer/v3).

- The Decoupling Rule: Your application code should never contain hardcoded strings longer than a few words. If it’s an instruction, it belongs in the registry.

- CI/CD for Prompts: Integrate your registry into your CI/CD pipeline. Before a prompt is tagged as production, it must pass a battery of Evals (deterministic and probabilistic) to ensure no regressions.
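Two of these practices lend themselves to small automated checks: validating names against the taxonomy, and gating promotion on an eval pass rate. The sketch below is one possible shape for both. The regex, the 0.9 threshold, and the names `parse_prompt_name` and `passes_eval_gate` are assumptions, and the model under test is a stub.

```python
from __future__ import annotations

import re
from typing import Callable

# [service]/[task]/[version], e.g. billing/invoice-summarizer/v3
NAME_PATTERN = re.compile(
    r"^(?P<service>[a-z0-9-]+)/(?P<task>[a-z0-9-]+)/v(?P<version>\d+)$"
)


def parse_prompt_name(name: str) -> dict:
    """Reject names that do not follow the taxonomy."""
    match = NAME_PATTERN.match(name)
    if match is None:
        raise ValueError(f"prompt name does not match taxonomy: {name!r}")
    parts = match.groupdict()
    return {
        "service": parts["service"],
        "task": parts["task"],
        "version": int(parts["version"]),
    }


EvalCase = tuple[str, Callable[[str], bool]]   # (input, check on the output)


def passes_eval_gate(generate: Callable[[str], str],
                     cases: list[EvalCase],
                     min_pass_rate: float = 0.9) -> bool:
    """Allow promotion only if enough eval cases pass."""
    if not cases:
        raise ValueError("at least one eval case is required")
    passed = sum(1 for text, check in cases if check(generate(text)))
    return passed / len(cases) >= min_pass_rate


def stub_generate(text: str) -> str:
    # Stand-in for "render the candidate prompt and call the model".
    return f"Summary: {text[:20]}"


cases: list[EvalCase] = [
    ("Invoice 1001 is overdue.", lambda out: out.startswith("Summary:")),
    ("Invoice 1002 was paid.", lambda out: len(out) < 200),
]

name = parse_prompt_name("billing/invoice-summarizer/v3")
ready = passes_eval_gate(stub_generate, cases)
print(name, ready)
```

Deterministic checks (exact format, length limits) fit the `check` callables directly. For probabilistic evals, the pass-rate threshold tolerates some variance across runs instead of demanding a perfect score.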

Conclusion

In the era of agentic workflows, prompts are the new source code. Treating them as second-class citizens, hardcoded, unversioned, and buried in your application, is a recipe for technical debt and operational gridlock.

A Prompt Registry is not just a tool; it is a fundamental architectural requirement for any team serious about building reliable AI at scale. Stop hardcoding your strings, start versioning your intelligence, and treat your prompts with the engineering rigor they deserve.

The future of AI isn’t just about better models; it’s about better management of those models.